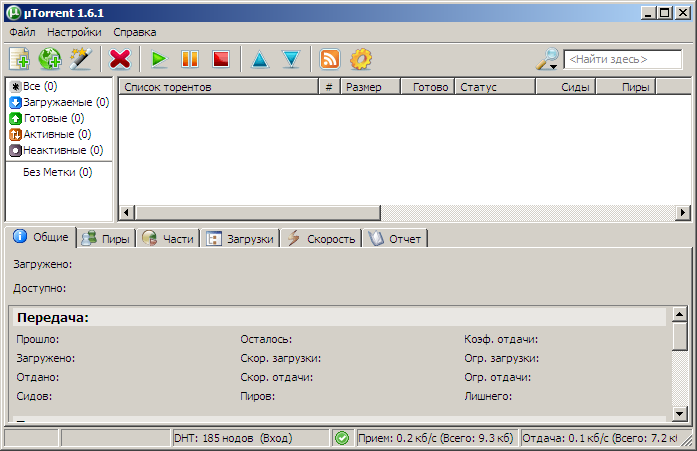

I'm mainly using uTorrent v3.5.5 latest builds on an old Win 7 Pro computer for BitTorrent-related tests, as uTorrent has extensive error logging and graphing. Using uTorrent, I'm also trying to keep seeded a long list of torrents that only have about 1 active peer per 100+ torrents. But I'm having trouble doing that because peers tend to download nothing and disconnect after about 36 seconds with this error message:

"Disconnect: Peer error: The system cannot find the file specified."

And the problem seems to be growing more severe! Even though uTorrent has trouble maintaining 20 connected peers across 1000's of torrents, I'm often seeing this error message repeated 5 times per second. (Each error is with a different peer, and not all are on the same torrents.)

… the drop in UDP package size that we see in the libTorrent …

The good news is that I'm pretty sure I figured out what was wrong with downloading from uTorrent. The deferred ACK logic in libtorrent appears to have deferred the ACK a bit too much, making it hard for uT to grow its congestion window. I committed the fix to the pull request I posted earlier: #3543 (specifically, this …).

I doubt that using jumbo packets would make a significant difference right now. It seems pretty clear that the main problem is the packet loss. The general problem with using large UDP packets and relying on fragmentation is that any packet loss becomes extremely expensive, as dropping a single fragment will toss the whole UDP packet.

So, I think the question is: why is the burst of packet loss spread out over multiple windows? How can congestion be detected earlier, before we run off the cliff and trigger multiple packets to be lost? What does your transport protocol do when a packet is lost? How much packet loss do you experience?

Would you mind running another test with the latest version of #3543 applied? I made some improvements to the logging as well.
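The point that dropping a single fragment tosses the whole UDP packet can be put into numbers with a small sketch. It is only a back-of-the-envelope model, not anything taken from libtorrent: the function names are hypothetical, fragments are assumed to be lost independently with the same probability, and the 1480-byte figure used in the note below assumes a 1500-byte MTU minus a 20-byte IPv4 header.

```cpp
#include <cassert>
#include <cmath>

// Number of IP fragments needed to carry one datagram, given how many
// bytes fit into a single fragment (rounds up).
int fragment_count(int datagram_bytes, int fragment_payload_bytes) {
    assert(datagram_bytes > 0 && fragment_payload_bytes > 0);
    return (datagram_bytes + fragment_payload_bytes - 1) / fragment_payload_bytes;
}

// Probability that the whole datagram is lost, assuming each fragment is
// dropped independently with probability fragment_loss. The datagram only
// survives if every one of its fragments arrives.
double datagram_loss_probability(int fragments, double fragment_loss) {
    assert(fragments > 0 && fragment_loss >= 0.0 && fragment_loss <= 1.0);
    return 1.0 - std::pow(1.0 - fragment_loss, fragments);
}
```

Under these assumptions a 65000-byte datagram needs 44 fragments, so a 1% per-fragment loss rate turns into roughly a 36% chance of losing the entire datagram, while an unfragmented packet is lost only 1% of the time.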
Based on my experience with other UDP-based transfer (acceleration) protocols, I think it's very detrimental that the UDP package size is reduced all the way down to 10000 at times. It should be at 65000 (max), or at least close to it, at all times, at least for this transfer (given the latency / package drop scenario).

When I run our Filemail Desktop application on the exact same server in Sydney that I am testing libTorrent on, and transfer files to the same PC in Norway, I get a transfer speed of over 4 megabytes per second. Notice that the application uses large UDP packages (close to max size). If I were to cap the size of these packages to 10000, I would get very slow speeds. I know that these are two fundamentally different protocols; I'm just offering some thoughts regd. …

    s = make_unique<lt::session>(lt::fingerprint("LT", LIBTORRENT_VERSION_MAJOR, LIBTORRENT_VERSION_MINOR, 0, 0));
    sp.set_bool(settings_pack::allow_multiple_connections_per_ip, true);
    sp.set_int(settings_pack::unchoke_slots_limit, 999999);
    sp.set_int(settings_pack::connections_limit, 10000);
    sp.set_bool(settings_pack::enable_outgoing_utp, true);
    sp.set_bool(settings_pack::enable_outgoing_tcp, true);
    sp.set_str(settings_pack::listen_interfaces, string("0.0.0.0:") + to_string(Config::TorrentPort));

    apr.flags |= lt::add_torrent_params::flag_super_seeding;  // ON
    apr.flags |= lt::add_torrent_params::flag_seed_mode;      // ON
    apr.flags &= ~lt::add_torrent_params::flag_auto_managed;  // OFF
    apr.ti = std::make_shared<lt::torrent_info>(pathToTorrentFile);
    lt::torrent_handle newHandle = s->add_torrent(apr);
    …t_upload_mode(true); // No effect?

It's not the sawtooth itself that's the problem; it's expected when there's no buffer delay. The problem is that the cwnd pulls back a lot more than being cut in half when there's packet loss (or multiplied by 0.78, as I updated in that patch).

However, it looks like you've tweaked the uTP parameters, or at least the target delay. Would you mind sharing what you've set the utp_* settings to? Setting the target delay to 1s (from the default 100ms) could have a small impact, as it makes the "delay factor" smaller, and the small delay that is detected has much less impact on throttling.

Another important setting is utp_gain_factor, which is specified as number of bytes per ACK (at 0 delay). The closer the measured delay gets to the target, the smaller the effective gain will be. It defaults to 3000 bytes, which is roughly twice as fast as TCP, which uses one segment, i.e. …

I suspect that by the time the first packet loss is detected, the cwnd has grown too large, and more packets will be lost soon (but apparently not soon enough, as it happens more than one window out, or my patch is wrong). So lowering the gain factor could be a useful test as well.
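To make the interaction between target delay, gain factor and loss back-off easier to reason about, here is a minimal LEDBAT-style sketch of a delay-based congestion window. It is not libtorrent's actual implementation: the struct, the function names and the scaling by acked bytes over cwnd are assumptions for illustration, and the only values taken from the discussion are the 100 ms default target, the 3000-byte gain and the 0.5 / 0.78 loss multipliers.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical model of a delay-based congestion window (illustration only).
struct CwndModel {
    double cwnd_bytes;         // current congestion window
    double target_delay_ms;    // e.g. 100 (default) or 1000
    double gain_factor_bytes;  // e.g. 3000, the maximum gain at zero delay
    double loss_multiplier;    // e.g. 0.5 or 0.78, applied on packet loss
    double min_cwnd_bytes;     // floor so the window never collapses to zero
};

// Measured delay relative to the target. Raising the target makes this
// ratio smaller for the same measured delay.
double delay_ratio(const CwndModel& m, double measured_delay_ms) {
    return measured_delay_ms / m.target_delay_ms;
}

// On ACK: full gain at zero delay, no gain at the target, and a negative
// gain (the window shrinks) once the measured delay exceeds the target.
void on_ack(CwndModel& m, double acked_bytes, double measured_delay_ms) {
    assert(m.cwnd_bytes > 0.0 && m.target_delay_ms > 0.0);
    // Assumption: the gain is spread over one window's worth of ACKs.
    double window_factor = std::min(acked_bytes / m.cwnd_bytes, 1.0);
    double gain = m.gain_factor_bytes
                * (1.0 - delay_ratio(m, measured_delay_ms))
                * window_factor;
    m.cwnd_bytes = std::max(m.cwnd_bytes + gain, m.min_cwnd_bytes);
}

// On loss: multiplicative decrease.
void on_loss(CwndModel& m) {
    m.cwnd_bytes = std::max(m.cwnd_bytes * m.loss_multiplier, m.min_cwnd_bytes);
}
```

In this model, a 20 ms measured delay scales the gain by 0.8 with a 100 ms target but by 0.98 with a 1 s target, which is why a large target delay leaves the gain almost unthrottled until packets are actually lost. Lowering `gain_factor_bytes` slows the growth in both cases.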
